Editor’s Note: This was first posted on December 18, 2013.

The holiday seasons of 2012 and 2013 in the education world have been dominated by the release of new international test-score data and the accompanying hand-wringing about the performance of the U.S. Advocates of every stripe have found a high-performing country whose existing policies match what they always thought the U.S. ought to do. Here at the Chalkboard we often take on the dangers of analyses that draw causal conclusions from correlational data, particularly when the analyst is free to keep mining the data until the desired pattern is revealed. There are certainly many real examples that illustrate this important point, but today I’d like to illustrate it with a frivolous example about the Yuletide, based on real data and analyses.

With one week to go before Christmas, most Americans are too busy going to holiday parties and shopping for last-minute gifts to worry about college ratings, teacher evaluation systems, and the other education policy issues of the day. Could the festive holiday spirit get in the way of putting students first? Or is it possible that a little holiday magic might increase student achievement?

I can’t prove that Santa is real, but with enough persistence can I come up with a rigorous empirical analysis that measures the causal effect of Christmas on student achievement? I began my search with the recently released PISA data for 2012. Do countries where Christmas is a public holiday, as listed in Wikipedia, score higher than countries where it is not? At first glance, the data do not look good for Kris Kringle. Education systems where Christmas is a public holiday score 3 points lower on PISA than other systems, a difference that is not statistically significant.

But then I remembered my colleague Tom Loveless’s warning about how PISA scores for China are likely misleading, with a selected group of kids from a few wealthy provinces included as separate systems and the rest of the country left out of the data. What if I only examine education systems that are entire countries? The situation starts to improve, with Christmas now estimated to raise PISA scores by 22 points! Unfortunately, this difference is still not statistically significant, which may not mean much to a layperson but means a lot to people who once took a statistics course in college.

There must be a better, more accurate method to measure the effect of Christmas. Perhaps the analysis should be weighted by the inverse of the standard error of the test score for each country, to give more weight to countries with more precise estimates? Bingo! Adding this bit of rigor to the method produces an estimated “Christmas effect” of 48 points on the PISA test, nearly half of a standard deviation, and it is statistically significant at the 5 percent level!

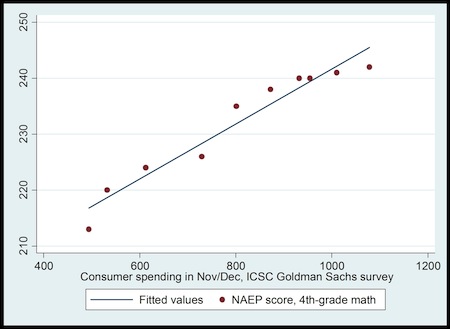
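For readers who want to see how much work the weighting step can do, here is a minimal sketch of a weighted comparison of group means, with each country weighted by the inverse of its standard error as described above. The function name `weighted_mean_gap` and the four made-up countries are assumptions for illustration only; they are not the PISA data or the code behind the estimates in this post.

```python
import numpy as np

def weighted_mean_gap(scores, std_errors, is_holiday):
    """Weighted mean score of holiday systems minus non-holiday systems.

    Each system is weighted by 1 / (standard error of its score), so
    more precisely measured systems count for more.
    """
    scores = np.asarray(scores, dtype=float)
    weights = 1.0 / np.asarray(std_errors, dtype=float)
    mask = np.asarray(is_holiday, dtype=bool)
    mean_holiday = np.average(scores[mask], weights=weights[mask])
    mean_other = np.average(scores[~mask], weights=weights[~mask])
    return mean_holiday - mean_other

# Hypothetical data: four invented education systems, not real PISA results.
scores = [500.0, 480.0, 520.0, 470.0]
std_errors = [2.0, 4.0, 2.0, 4.0]
is_holiday = [True, True, False, False]

# Same data, equal weights, for comparison.
unweighted_gap = weighted_mean_gap(scores, [1.0] * 4, is_holiday)
weighted_gap = weighted_mean_gap(scores, std_errors, is_holiday)
print(unweighted_gap, weighted_gap)
```

Even in this toy case the estimated gap changes when the weights change, which is exactly why a free choice of weighting scheme gives a determined analyst one more knob to turn.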

But I knew that there are some grinches out there who will gripe and groan about omitted variable bias, so I needed to confirm that the effect was real by finding it in another data source. A time-series analysis just might do the trick! I gathered NAEP scores on fourth-grade math performance for the U.S. from 1990 to 2013 and linked them to data on how good the previous year’s holiday season was (operationalized as consumer spending in November and December as measured by the ICSC-Goldman Sachs index).

Do students learn more in years when there’s more holiday cheer? The figure below shows that they do: student learning rises more or less in lock-step with the amount of holiday spending, a causal effect that is statistically significant at the 1 percent level. Standardizing the NAEP scores and putting the spending index on a logarithmic scale implies that if we could just have about 30 percent more holiday spirit, our students would do as well as those in Finland!

Now I know that there will still be some Scrooges among you who will worry that all I’ve documented here are two correlated secular trends, especially because the consumer spending metric is not population-adjusted. Others will complain that I’ve degraded the true meaning of Christmas by measuring it as consumer spending, and that test scores do not fully capture student performance.
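The Scrooges’ worry about correlated trends is easy to demonstrate. The sketch below builds two simulated series that share nothing except an upward drift, then compares their correlation in levels with their correlation in year-to-year changes. Everything here is an assumption for illustration: the series are randomly generated, the names `test_scores` and `holiday_spending` are only labels, and none of it uses the NAEP or ICSC-Goldman Sachs data.

```python
import numpy as np

def levels_and_changes_corr(a, b):
    """Return (correlation of levels, correlation of first differences)."""
    a = np.asarray(a, dtype=float)
    b = np.asarray(b, dtype=float)
    levels = np.corrcoef(a, b)[0, 1]
    changes = np.corrcoef(np.diff(a), np.diff(b))[0, 1]
    return levels, changes

rng = np.random.default_rng(0)
years = np.arange(24)  # 24 annual observations, e.g. 1990 through 2013

# Two simulated series with independent noise; the only thing they have
# in common is that both trend upward over time.
test_scores = 1.0 * years + rng.normal(0.0, 1.0, size=years.size)
holiday_spending = 2.0 * years + rng.normal(0.0, 2.0, size=years.size)

levels_corr, changes_corr = levels_and_changes_corr(test_scores, holiday_spending)
print(f"levels: {levels_corr:.2f}, year-to-year changes: {changes_corr:.2f}")
```

The correlation in levels comes out very high because both series rise over time. The correlation in changes, which strips out the shared trend, has no reason to be large, since the noise in the two series is independent.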

But I’d like you to consider the possibility that maybe, just maybe, there’s some holiday magic at work here.

—Matthew M. Chingos

This post originally appeared on the Brown Center Chalkboard blog.