EXECUTIVE SUMMARY

The release of a new report on the effects of School Improvement Grants (SIG), part of the American Recovery and Reinvestment Act aimed at improving the nation’s lowest-performing schools, called into question the viability of improving low-performing schools at scale. The report stated that, “Implementing a SIG-funded model had no impact on math or reading test scores, high school graduation, or college enrollment.” A more careful read of the report, however, shows that the research was not able to tell whether the grants affected any of these outcomes. The effects would have had to be unrealistically large for the study to detect them. The difference between the report’s conclusion that there was no effect and the more appropriate conclusion that the researchers were not able to detect an effect is an important one, especially in light of state-specific research showing some success of the School Improvement Grant program.

Two studies from California show not only that schools improved student learning outcomes as a result of participating in the SIG program, but also some of the mechanisms by which this improvement occurred. In particular, rich data on SIG schools in one of the studies show that schools improved both by differentially retaining their most effective teachers and by providing teachers with increased supports for instructional improvement, such as opportunities to visit each other’s classrooms and to receive meaningful feedback on their teaching practice from school leaders.

School improvement is not an easy task. Clearly, many school turnaround efforts have not been successful. Closing low-performing schools and reopening new schools or sending students to other, higher-performing schools has shown positive outcomes for students and may be the most beneficial choice in some cases. However, school closings come with other costs, and recent intensive efforts at improving low-performing schools, such as the School Improvement Grant program, have shown promise. These successes provide direction for states as they seek to implement new programs under ESSA.

A new version of the Elementary and Secondary Education Act (the Every Student Succeeds Act, or ESSA) and a new administration in Washington are likely to reduce federal oversight and funding for school improvement, but states will still face the task of finding solutions for students in their lowest-performing schools.

While students within a school vary in their academic achievement, low achievement is not at all evenly distributed across schools. It is concentrated in a minority of schools, and these schools typically serve students from low-income families and, often, from non-white families.[i] One set of numbers for New York State finds that 70 percent of low-achieving students were concentrated in 20 percent of schools. Many low-income and non-white students attend schools in which student learning is on par with, or even better than, that in schools in higher-income areas. However, the lowest-performing schools are concentrated in the poorest areas.

Substantial policy effort has focused on improving the educational opportunities for students in these schools, including the Obama administration’s School Improvement Grants (SIG). While solutions to date have been far from perfect, they also have not been uniform failures. Instead, they provide direction for the continuing effort to address low-performing schools, whether they are traditional public schools or schools of choice.

One approach that has shown positive results is simply closing schools and either moving students to other schools or reopening the school with new staff. In the early 2000s, New York City closed 21 low-performing high schools and opened more than 200 small new high schools. A rigorous study found that these changes substantially improved students’ high school graduation rates and other desirable outcomes.[ii] A study of low-performing school closures in Michigan also found positive effects on achievement for students who had previously attended these schools, though also some negative effects on students in the schools that received students from the closed schools.[iii]

School closures are a viable option; however, they are not without problems. Even with the potential for longer-term gains, short-term disruptions can negatively affect students and communities already skeptical of low-quality public services. One study that documented students’ understanding of their high school’s closure found that the closing school had provided students with trusting relationships with adults and a sense of belonging, and that the students believed that these and other benefits had not been considered in the decisionmaking to close the school. Students also reported being excluded from the decisionmaking process.[iv] School closings are also often politically difficult, with considerable pushback from teachers and parents. Michelle Rhee’s attempt to close the lowest-performing schools in Washington, DC, when she served as chancellor of DC Public Schools is illustrative. These closings no doubt contributed to the end of her leadership in the district and may also have played a part in Adrian Fenty’s loss in the mayoral election.[v]

An alternative to school closures is an intensive school improvement effort, or whole-school reform. The Obama administration, for example, targeted $3.5 billion in SIG funds to persistently lowest-achieving (PLA) schools as part of the American Recovery and Reinvestment Act in 2009. PLA schools were defined as those with baseline achievement in the lowest five percent (based on three-year average proficiency rates) that had also made the least progress in raising student achievement over the previous five years. To receive funding, targeted schools were required to adopt one of four intervention models beginning in 2011.[vi] The transformation model required replacing the principal, implementing curricular reform, and introducing teacher evaluations based in part on student performance and used in personnel decisions (e.g., rewards, promotions, retentions, and firing). The turnaround model included all of the requirements of the transformation model, as well as replacing at least 50 percent of the staff. The restart model required the school to close and reopen under the leadership of a charter or education management organization. Finally, the closure model simply closed the school.

Two studies focusing on SIG schools in California, the state with the most SIG awards, have found that the program had a meaningful positive effect on student learning in those schools.

The first California study provides the better estimate of the causal effects of the program in the state by comparing schools that were just eligible for SIG grants under the definition of PLA with those that just missed eligibility. It finds that the reform increased school-average student test performance by roughly a third of the school-level standard deviation. Put in more concrete terms, the average school that was eligible for the SIG grant was approximately 150 points below the state’s performance target of 800 just prior to the reform. The reform led to an increase of 34 scale points, closing this gap by 23 percent.[vii] The SIG grants in California averaged approximately $1,500 per pupil, so the program was costly, but the benefits were greater than those of other popular approaches, such as class size reduction, even on a per-dollar basis.
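As a quick check on the gap-closing figure, the arithmetic below uses only the approximate numbers reported above: a baseline gap of about 150 points below the target of 800 and an estimated effect of 34 scale points. The second line backs out an implied school-level standard deviation from the statement that 34 points is roughly a third of one; that value is an approximation from rounded figures, not a number reported in the study.

```latex
\begin{aligned}
\text{share of gap closed} &\approx \frac{34}{150} \approx 0.227 \approx 23\% \\
\text{implied school-level SD} &\approx 34 \div \tfrac{1}{3} \approx 100 \text{ scale points}
\end{aligned}
```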

The second study of SIG schools in California, for which I am an author with Min Sun and Emily Penner, uncovers some of the pathways by which this improvement occurs.[viii] Focusing specifically on one urban school district because of the richness of available data, the study finds positive effects of the program, similar to those for the broader state. Figure 1 shows that, prior to reform, the average math score of students in the SIG schools was substantially below that in non-SIG schools (by more than 80 percent of a standard deviation). After the reform started in the fall of 2010, in clear contrast to the pre-reform trend, mean math achievement rose much more quickly in SIG schools than in non-SIG schools, closing almost one-third of the gap between them.

While the achievement results are encouraging, schools could have improved results in ways that would be unlikely to benefit students in the long run, such as reclassifying students to exempt them from testing, or outright cheating. The rich district data allowed us to see that the positive results were reflected in measures of positive school processes as well. Given the importance of teachers to student success, we focused on whether the reforms changed the composition of the teacher workforce and whether they changed the supports that teachers were receiving in their work. Both processes were evident in the SIG schools.

Figure 1. Comparison of trends in student achievement between SIG and non-SIG schools for students who attended these schools in fall 2010 (panels: Math; ELA).

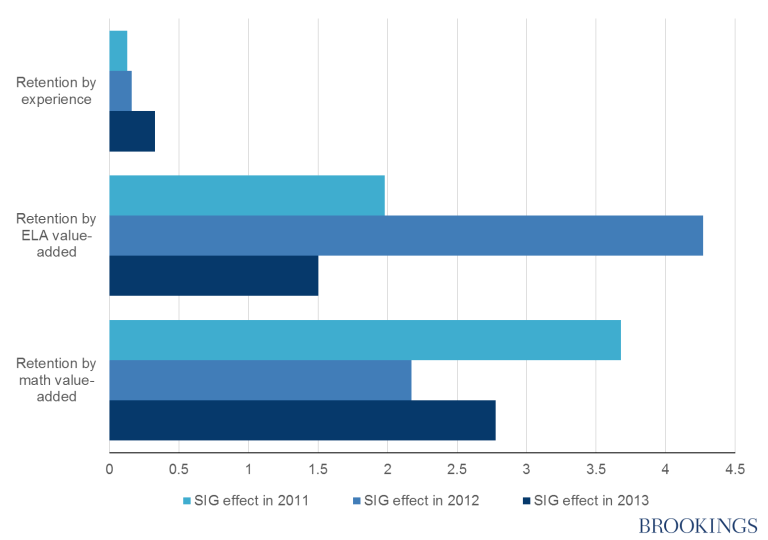

First, SIG schools improved their retention of effective teachers relative to less effective teachers more than non-SIG schools did. In this case, effectiveness is defined by the teacher’s demonstrated ability to improve student test performance. In contrast, the SIG schools were no more likely to retain more experienced teachers, which provides some evidence that they were particularly focused on student learning. Figure 2 illustrates these patterns.

Figure 2: The effects of the SIG reforms on teacher retention. The retention numbers are the percent increase in the retention of a teacher with one standard deviation higher value-added in SIG schools relative to non-SIG schools.

SIG schools not only altered the composition of their teacher workforce, but they also increased the supports provided to teachers. Annual teacher surveys between 2010 and 2013 asked teachers about the frequency of visiting another teacher’s classroom to watch him or her teach; having a colleague observe their classroom; inviting someone in to help their class; going to a colleague to get advice about an instructional challenge they faced; receiving useful suggestions for curriculum material from colleagues; receiving meaningful feedback on their teaching practice from colleagues; receiving meaningful feedback on their teaching practice from their principal; and receiving meaningful feedback on their teaching practice from another school leader (e.g., an assistant principal or instructional coach). On a composite measure of their responses, there was no evident change in teacher-reported support after year one of the SIG award, but by the second year, SIG teachers reported significant increases in support (0.26 standard deviations) that grew even larger in the third year (0.41 standard deviations). Part of the gains in student learning likely stemmed from these improvements in the professional opportunities for teachers.

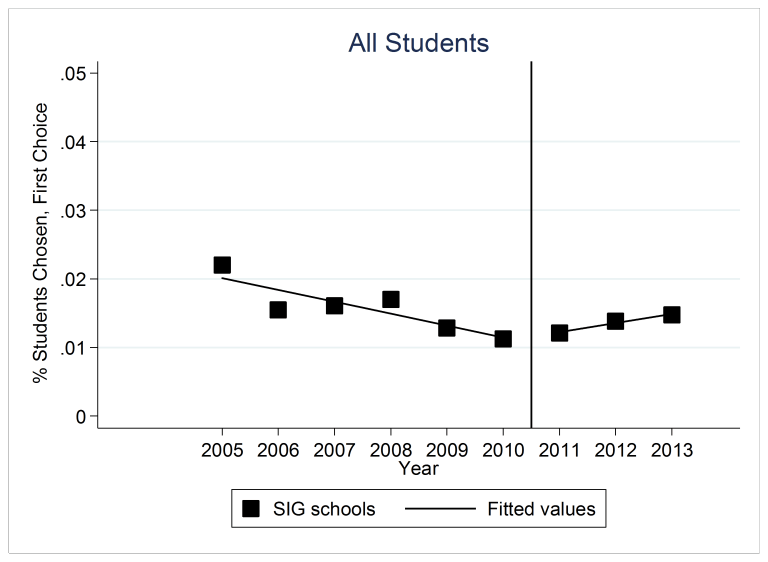

Figure 3. Proportion of students listing a SIG school as their first choice.

Parents recognized the improvement in SIG schools as well. The school district has a full school choice plan in which the vast majority of families choose schools for their children in kindergarten, sixth, and ninth grade. Not surprisingly, SIG schools are not popular among families, but, as shown in Figure 3, the percent of families submitting choice preferences that listed a SIG school as their first choice was decreasing prior to the reform and then began to increase after it. While such a choice remained unlikely, the odds that students selected a SIG school as their first choice increased significantly, by 31 percent, in the first year of reform relative to the odds of making the same choice before the intervention, after accounting for student characteristics, distance from home to the school, and school fixed effects. The odds that students listed a SIG school as their first choice increased by 65 percent in year 2 and 117 percent in year 3. Families observed changes in SIG schools and responded to those changes.
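The estimates above are changes in odds, not in probabilities, and the two are easy to conflate. The Python sketch below shows how a percent increase in odds translates into a probability. The 5 percent baseline probability is a hypothetical value chosen only for illustration (the study's actual baseline is not reported here), and the function names are likewise illustrative.

```python
# Sketch: convert a percent increase in odds into an implied probability.
# ASSUMPTION: the 5 percent baseline probability is hypothetical, chosen only
# for illustration; it is not a figure reported in the study.


def prob_to_odds(p: float) -> float:
    """Convert a probability (0 < p < 1) to odds, p / (1 - p)."""
    return p / (1.0 - p)


def odds_to_prob(odds: float) -> float:
    """Convert odds back to a probability, odds / (1 + odds)."""
    return odds / (1.0 + odds)


def implied_probability(baseline_p: float, pct_increase_in_odds: float) -> float:
    """Apply a percent increase in odds (e.g. 31 for +31%) to a baseline probability."""
    new_odds = prob_to_odds(baseline_p) * (1.0 + pct_increase_in_odds / 100.0)
    return odds_to_prob(new_odds)


if __name__ == "__main__":
    baseline = 0.05  # hypothetical baseline, for illustration only
    # Reported odds increases relative to pre-reform, for reform years 1, 2, 3.
    for year, pct in [(1, 31), (2, 65), (3, 117)]:
        p = implied_probability(baseline, pct)
        print(f"Year {year}: odds +{pct}% -> probability {p:.3f}")
```

Under that hypothetical baseline, the reported increases of 31, 65, and 117 percent in the odds correspond to probabilities of approximately 6.5, 8.0, and 10.3 percent. When the baseline probability is small, the proportional change in probability is close to, but a little smaller than, the proportional change in odds; with a larger baseline the gap between the two widens.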

Both California studies point to positive effects of the SIG program in that state, providing evidence that targeted programs aimed at improving low-performing schools can be successful at a relatively large scale. These results, of course, do not imply that all recent school turnaround reforms have been successful. One study in North Carolina finds null results overall and some negative results for student subgroups for a similar approach implemented as part of that state’s Race to the Top program.[ix] California, however, is not a lone case. Recent evidence from Massachusetts also shows positive effects for SIG schools.[x] Unfortunately, national evidence is inconclusive. The largest-scale study of the SIG program, using a sample of 190 SIG schools from 60 districts in 22 states, assessed the effects of the reforms using a plausible technique for identifying causal effects, but in practice the estimates from this study were too imprecise to distinguish whether the SIG schools nationally had an effect similar to the one in California or had no effect at all.[xi] The small number of schools per state likely contributed to the imprecision of the national estimates. While the study received attention for not being able to rule out that the effects were zero, what was lost in the coverage was that the report was also not able to rule out that the effects were sizable. For example, to be detected by the study, the reform would have had to close the gap in math achievement between the students in SIG schools and the average student in the state by a third in the first year of the program and by 40 percent by the third year. While aspirational, these benchmarks are also likely to be unrealistic.
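The connection between imprecision and "unrealistically large" detectable effects can be made concrete with the conventional minimum detectable effect (MDE) approximation: for a two-sided test at significance level alpha with a target power, a true effect must be roughly (z for 1 − alpha/2 plus z for the power) standard errors in size to be detected reliably. The Python sketch below computes that multiplier with the standard library. It is the textbook approximation under the usual assumptions (5 percent significance, 80 percent power), not necessarily the exact calculation used in the national report, and the function names are illustrative.

```python
from statistics import NormalDist


def mde_multiplier(alpha: float = 0.05, power: float = 0.80) -> float:
    """Multiplier M in MDE ~= M * SE for a two-sided test at level alpha.

    Textbook normal approximation; assumes the impact estimate is roughly normal.
    """
    std_normal = NormalDist()  # mean 0, standard deviation 1
    z_alpha = std_normal.inv_cdf(1.0 - alpha / 2.0)  # about 1.96 when alpha = 0.05
    z_power = std_normal.inv_cdf(power)  # about 0.84 when power = 0.80
    return z_alpha + z_power


def minimum_detectable_effect(standard_error: float,
                              alpha: float = 0.05,
                              power: float = 0.80) -> float:
    """Smallest true effect detected with the given power (same units as the SE)."""
    return mde_multiplier(alpha, power) * standard_error


if __name__ == "__main__":
    # Hypothetical standard error, for illustration only (not from the report).
    se = 0.10
    print(f"Multiplier: {mde_multiplier():.2f}")
    print(f"MDE for SE = {se}: {minimum_detectable_effect(se):.2f} SD")
```

With the defaults the multiplier is about 2.8, so a purely hypothetical standard error of 0.10 school-level standard deviations would imply an MDE of about 0.28 standard deviations; that standard error is illustrative, not a value from the report. Because the MDE scales directly with the standard error, a design with few schools per state, and hence large standard errors, can only detect large effects.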

With ESSA and the new administration, states will have increased flexibility to address their persistently low-performing schools. As noted, school closures have been a popular policy approach both for charter schools and for traditional public schools, particularly in large urban areas. This approach is not without reason. It is often easier to start an effective school from scratch than to improve one that is badly struggling. But such closures come with costs: political, emotional, and potentially even academic for some students. School turnaround models are often cited as failures.[xii] Nonetheless, recent efforts at school improvement that combine aligned goals, strategic staff replacement, and substantial funding have shown promise. In California, we find that SIG schools were able to improve student performance and families’ assessment of the schools, and that they did this, at least in part, both by improving the composition of the educator workforce through differentially retaining more effective teachers and by improving the professional supports for teachers in the school. As states take over responsibility for addressing their low-performing schools, they can draw lessons from these successes.

—Susanna Loeb

Susanna Loeb is the Barnett Family Professor of Education at Stanford University, faculty director of CEPA Labs, and a co-director of Policy Analysis for California Education.

This post originally appeared as part of Evidence Speaks, a weekly series of reports and notes by a standing panel of researchers under the editorship of Russ Whitehurst.

The author(s) were not paid by any entity outside of Brookings to write this particular article and did not receive financial support from or serve in a leadership position with any entity whose political or financial interests could be affected by this article.

Notes:

[i] Wyckoff, Jim (2006). “The Imperative of 480 Schools,” Education Finance and Policy, 1(3): 279-287.

[ii] See Bloom, Howard S., and Rebecca Unterman. 2012. Sustained Positive Effects on Graduation Rates Produced by New York City’s Small Public High Schools of Choice. New York: MDRC and Bloom, Howard S., Saskia Levy Thompson, and Rebecca Unterman, with Corinne Herlihy and Collin F. Payne. 2010. Transforming the High School Experience: How New York City’s New Small Schools Are Boosting Student Achievement and Graduation Rates. New York: MDRC.

[iii] Brummet, Quentin (2014). “The Effect of School Closings on Student Achievement,” Journal of Public Economics, 119: 108-124.

[iv] Kirshner, Ben; Pozzoboni, Kristen M (2011). “Student Interpretations of a School Closure: Implications for Student Voice in Equity-Based School Reform,” Teachers College Record, 113 (8): 1633-1667.

[v] See, for example, “As Parents Fight School Closings, D.C. Chancellor Says Input Matters.” Washington Post, January 17, 2008 by V. Dion Haynes and Theola Labbé (http://www.washingtonpost.com/wp-dyn/content/article/2008/01/16/AR2008011604008.html).

[vi] U.S. Department of Education, 2010.

[vii] Dee, Thomas (2012). “School Turnarounds: Evidence from the 2009 Stimulus,” NBER Working Paper No. 17990.

[viii] Sun, Min, Penner, Emily, & Loeb, Susanna (Forthcoming). “Resource- and Approach-Driven Multi-Dimensional Change: Three-Year Effects of School Improvement Grants,” American Educational Research Journal.

[ix] Heissel, Jennifer & Ladd, Helen F. (2016). “School Turnaround in North Carolina: A Regression Discontinuity Analysis,” CALDER Working Paper 156.

[x] http://www.aypf.org/school-turnaround/collaborating-within-state-agencies-to-ensure-the-effective-use-of-evidence/

[xi] Dragoset, Lisa, Thomas, Jaime, Herrmann, Mariesa, Deke, John, James-Burdumy, Susanne, Graczewski, Cheryl, Boyle, Andrea, Upton, Rachel, Tanenbaum, Courtney, & Giffin, Jessica (2017). School Improvement Grants: Implementation and Effectiveness. National Center for Education Evaluation and Regional Assistance, Institute of Education Sciences. A third study, which uses a different approach and data only on Texas schools, finds mixed results in the first year of implementation, including negative impacts on student achievement in elementary and middle school and positive effects on high school graduation rates. Dickey-Griffith, David (2013). “Preliminary Effects of the School Improvement Grant Program on Student Achievement in Texas,” The Georgetown Public Policy Review.

[xii] See https://www.washingtonpost.com/opinions/four-decades-of-failed-school-reform/2013/09/27/dc9f2f34-2561-11e3-b75d-5b7f66349852_story.html?utm_term=.ee31ad7af3c4 for one of many examples.