An unabridged version of this article is available here.

An interview with Patrick Wolf about his evaluation of the D.C. Opportunity Scholarship Program and about its likely future is available here.

School choice supporters, including hundreds of private school students in crisp uniforms, filled Washington, D.C.’s Freedom Plaza last May to protest a congressional decision to eliminate the city’s federally funded school voucher program after the next school year. That afternoon, President Obama announced a compromise proposal to grandfather the more than 1,700 students currently in the District of Columbia Opportunity Scholarship Program, funding their vouchers through high school graduation, but denying entry to additional children. Both program supporters and opponents cite evidence from an ongoing congressionally mandated Institute of Education Sciences (IES) evaluation of the program, for which I am principal investigator, to buttress their positions, rendering the evaluation a Rorschach test for one’s ideological position on this fiercely debated issue.

School vouchers provide funds to parents to enable them to enroll their children in private schools and, as a result, are one of the most controversial education reforms in the United States. Among the many points of contention is whether voucher programs in fact improve student achievement. Most evaluations of such programs have found at least some positive achievement effects, but not always for all types of participants and not always in both reading and math. This pattern of results has so far failed to generate a scholarly consensus regarding the beneficial effects of school vouchers on student achievement. The policy and academic communities seek more definitive guidance.

The IES released the third-year impact evaluation of the Opportunity Scholarship Program (OSP) in April 2009. The results showed that students who participated in the program performed at significantly higher levels in reading than the students in an experimental control group. Here are the study findings and my own interpretation of what they mean.

Opportunity Scholarships

Currently, 13 directly funded voucher programs operate in four U.S. cities and six states, serving approximately 65,000 students. Another seven programs indirectly fund private K-12 scholarship organizations through government tax credits to individuals or corporations. About 100,000 students receive school vouchers funded through tax credits. All of the directly funded voucher programs are targeted to students with some educational disadvantage, such as low family income, disability, or status as a foster child.

Nineteen of the 20 school voucher programs in the U.S. are funded by state and local governments. The OSP is the only federal voucher initiative. Established in 2004 as part of compromise legislation that also included new spending on charter and traditional public schools in the District of Columbia, the OSP is a means-tested program. Initial eligibility is limited to K-12 students in D.C. with family incomes at or below 185 percent of the poverty line. Congress has appropriated $14 million annually to the program, enough to support about 1,700 students at the maximum voucher amount of $7,500. The voucher covers most or all of the costs of tuition, transportation, and educational fees at any of the 66 D.C. private schools that have participated in the program. By the spring of 2008, a total of 5,331 eligible students had applied for the limited number of Opportunity Scholarships. Recipients are selected by lottery, with priority given to students applying to the program from public schools deemed in need of improvement (SINI) under No Child Left Behind. Scholars and policymakers have since questioned the extent to which SINI designations accurately signal school quality because they are based on levels of achievement instead of the more informative measure of achievement gains over time.

The third-year impact evaluation tracked the experiences of two cohorts of students. All of the students were attending public schools or were rising kindergartners at the time of application to the program. Cohort 1 consisted of 492 students entering grades 6-12 in 2004. Cohort 2 consisted of 1,816 students entering grades K-12 in 2005. The 2,308 students in the study make it the largest school voucher evaluation in the U.S. to employ the “gold standard” method of random assignment.

Voucher Effects

Researchers over the past decade have focused on evaluating voucher programs using experimental research designs called randomized control trials (RCTs). Such experimental designs are widely used to evaluate the efficacy of medical drugs prior to making such treatments available to the public. With an RCT design, a group of students who all qualify for a voucher program, and whose parents are equally motivated to exercise private school choice, participate in a lottery. The students who win the lottery become the “treatment” group. The students who lose the lottery become the “control” group. Since only a voucher offer and mere chance distinguish the treatment students from their control group counterparts, any significant difference in student outcomes for the treatment students can be attributed to the program. Although not all students offered a voucher will use it to enroll in a private school, the data from an RCT can also be used to generate a separate estimate of the effect of voucher use (see the Methodology Notes at the end of this article).
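
The logic of the lottery can be illustrated with a small simulation. The sketch below is illustrative only: the sample size, the score distribution, and the 5-point true effect are made-up assumptions rather than figures from the evaluation, and the function name `simulate_lottery` is hypothetical. Because a coin flip alone decides who receives the offer, the two groups have the same expected baseline, and the gap in average scores recovers the effect of the offer.

```python
import random
import statistics


def simulate_lottery(n_students=2000, true_effect=5.0, seed=0):
    """Simulate a voucher lottery and return the treatment-control gap.

    All numbers are hypothetical; none come from the OSP evaluation.
    """
    rng = random.Random(seed)
    treatment, control = [], []
    for _ in range(n_students):
        # Baseline score stands in for everything about the student other
        # than the offer (prior achievement, family motivation, and so on).
        baseline = rng.gauss(600.0, 30.0)
        # The lottery: chance alone decides who is offered a voucher.
        if rng.random() < 0.5:
            treatment.append(baseline + true_effect)
        else:
            control.append(baseline)
    # Difference in group means estimates the effect of the offer.
    return statistics.mean(treatment) - statistics.mean(control)


if __name__ == "__main__":
    gap = simulate_lottery()
    print(f"Estimated effect of the offer: {gap:.2f} points")
```

With a finite sample the estimate will not equal the assumed effect exactly, which is why evaluations report statistical significance alongside the point estimate.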

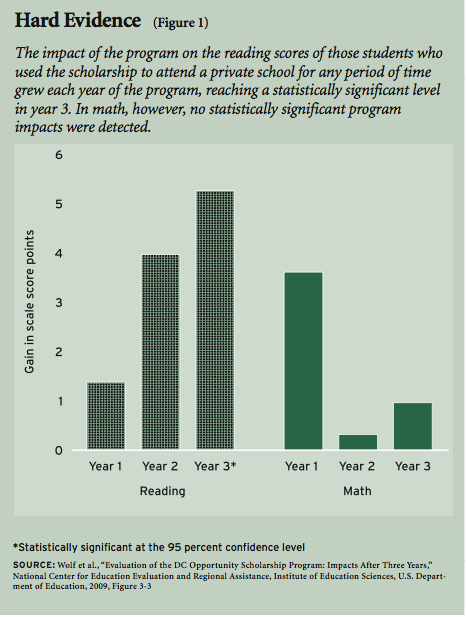

Using an RCT research design, the ongoing IES evaluation found no impacts on student math performance but a statistically significant positive impact of the scholarship program on student reading performance, as measured by the Stanford Achievement Test (SAT-9). The estimated impact of using a scholarship to attend a private school for any length of time during the three-year evaluation period was a gain of 5.3 scale points in reading. That estimate provides the impact on all those who ever attended a private school, whether for one month, three years, or any length of time in between (see Figure 1). Consequently, the estimate should be interpreted as a lower-bound estimate of the three-year impact of attending a private school, because many students who used a scholarship during the three-year period did not remain in private school throughout the entire period. The data indicate that members of the treatment group who were attending private schools in the third year of the evaluation gained an average of 7.1 scale score points in reading from the program.

What do these gains mean for students? They mean that the students in the control group would need to remain in school an extra 3.7 months on average to catch up to the level of reading achievement attained by those who used the scholarship opportunity to attend a private school for any period of time. The catch-up time would have been around 5 months for those in the control group as compared to those who were attending a private school in the third year of the evaluation.
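
The two catch-up figures are consistent with each other, as a short calculation shows. The sketch below assumes that reading scores grow at a constant number of scale points per month, a simplification introduced here for illustration; the evaluation's own conversion may rest on grade-specific norms. The function names are hypothetical; the three input figures are the ones quoted above.

```python
# Figures quoted in the article.
IMPACT_EVER_USED = 5.3      # scale points, any scholarship use over three years
MONTHS_EVER_USED = 3.7      # months of schooling equated with 5.3 points
IMPACT_YEAR3_PRIVATE = 7.1  # scale points, in private school in year 3


def implied_points_per_month(impact_points, months):
    """Growth rate implied by one impact/months pair (assumed constant)."""
    return impact_points / months


def months_to_catch_up(impact_points, points_per_month):
    """Months of ordinary growth needed to close a gap of impact_points."""
    return impact_points / points_per_month


rate = implied_points_per_month(IMPACT_EVER_USED, MONTHS_EVER_USED)
catch_up = months_to_catch_up(IMPACT_YEAR3_PRIVATE, rate)

print(f"Implied rate: {rate:.2f} scale points per month")            # 1.43
print(f"Catch-up time for a 7.1-point gap: {catch_up:.1f} months")   # 5.0
```

Under that assumption, the 7.1-point gain works out to roughly 5 months, matching the figure given for students attending private school in year 3.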

Over time, in my opinion, the effects of the program show a trend toward larger reading gains cumulating for students. Especially when one considers that students who used their scholarship in year 1 needed to adjust to a new and different school environment, the reading impacts of using a scholarship of 1.4 scale score points (not significant) in year 1, 4.0 scale score points (not significant) in year 2, and 5.3 scale score points (significant) in year 3 suggest that students are steadily gaining in reading performance relative to their peers in the control group the longer they make use of the scholarship. No trend in program impacts is evident in math.

What explains the fact that positive impacts have been observed as a result of the OSP in reading but not in math? Paul Peterson and Elena Llaudet of Harvard University, in a nonexperimental evaluation of the effects of school sector on student achievement, suggest that private schools may boost reading scores more than math scores for a number of reasons, including a greater content emphasis on reading, the use of phonics instead of whole-language instruction, and the greater availability of well-trained education content specialists in reading than in math. Any or all of these explanations for a voucher advantage in reading but not in math are plausible and could be behind the pattern of results observed for the D.C. Opportunity Scholarships. The experimental design of the D.C. evaluation, while a methodological strength in many ways, makes it difficult to connect the context of students’ educational experiences with specific outcomes in any reliable way. As a result, one can only speculate as to why voucher gains are clear in reading but not observed in math.

Student Characteristics

The OSP serves a highly disadvantaged group of D.C. students. Descriptive information from the first two annual reports indicates that more than 90 percent of students are African American and 9 percent are Hispanic. Their family incomes averaged less than $20,000 in the year in which they applied for the scholarship.

Overall, participating students were performing well below national norms in reading and math when they applied to the program. For example, the Cohort 1 students had initial reading scores on the SAT-9 that averaged below the 24th National Percentile Rank, meaning that more than 75 percent of students in their respective grades nationally were performing higher than Cohort 1 in reading. In my view, these descriptive data show how means tests and other provisions to target school voucher programs to disadvantaged students serve to minimize the threat of cream-skimming. The OSP reached a population of highly disadvantaged students because it was designed by policymakers to do so.

Did Only Some Students Benefit?

Several commentators have sought to minimize the positive findings of the OSP evaluation by suggesting that only certain subgroups of participants benefited from the program. Martin Carnoy states that “the treated students in Cohort 1 were concentrated in middle schools and the effect on their reading score was significantly higher than for treated students in Cohort 2.” Henry Levin likewise asserts that “the evaluators found that receiving a voucher resulted in no advantage in math or reading test scores for either [low achievers or students from SINI schools].”

The actual results of the evaluation provide no scientific basis for claims that some subgroups of students benefited more in reading from the voucher program than other subgroups. The impact of the program on the reading achievement of Cohort 1 students did not differ by a statistically significant amount from the impact of the program on the reading achievement of Cohort 2 students, Carnoy’s claim notwithstanding. Nor did students with low initial levels of achievement and applicants from SINI schools experience significantly different reading gains from the program than high achievers and non-SINI applicants. The mere fact that statistically significant impacts were observed for a particular subgroup does not mean that impacts for that subgroup are significantly different from impacts for students outside it. For example, Group A and Group B may have experienced roughly similar impacts, but the impact for Group A might have been just large enough for it to be significantly different from zero (or no impact at all), while Group B’s quite similar impact fell just below that threshold.
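
The Group A and Group B example can be made concrete with invented numbers. The sketch below is a simplified illustration, not the evaluation's actual procedure: the impacts and standard errors are hypothetical, the two groups are assumed to be independent and non-overlapping, and significance is judged with a normal approximation at the 5 percent level.

```python
import math

CRITICAL_Z = 1.96  # two-sided 5 percent threshold, normal approximation


def is_significant(estimate, std_error):
    """True if the estimate differs from zero at the 5 percent level."""
    return abs(estimate / std_error) > CRITICAL_Z


# Hypothetical subgroup impacts (scale points) and standard errors.
impact_a, se_a = 6.0, 2.9
impact_b, se_b = 5.0, 2.9

# For independent groups, the standard error of the difference combines
# the two standard errors in quadrature.
diff = impact_a - impact_b
se_diff = math.sqrt(se_a**2 + se_b**2)

print("Group A differs from zero:", is_significant(impact_a, se_a))   # True
print("Group B differs from zero:", is_significant(impact_b, se_b))   # False
print("A differs from B:", is_significant(diff, se_diff))             # False
```

Group A clears the threshold and Group B does not, yet the 1-point difference between them is far too small relative to its standard error to support a claim that one group benefited more than the other.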

From a scientific standpoint, three conclusions are valid about the achievement results in reading from the year 3 impact evaluation of the OSP:

- The program improved the reading achievement of the treatment group students overall.

- Overall reading gains from the program were not significantly different across the various subgroups examined.

- Three distinct subgroups of students—those who were not from SINI schools, students scheduled to enter grades K-8 in the fall after application to the program, and students in the higher two-thirds of the performance distribution (whose average reading test scores at baseline were at the 37th percentile nationally)—experienced statistically significant reading impacts from the program when their performance was examined separately. Female students and students in Cohort 1 saw reading gains that were statistically significant with reservations due to the possibility of obtaining false positive results when making comparisons across numerous subgroups.
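
The reservation about false positives in the last point reflects a general property of running many tests. As a rough sketch, assuming each test is independent and uses a 5 percent significance level (an assumption made here for simplicity; subgroup tests on overlapping sets of students are not independent, and the article does not describe the evaluation's exact adjustment), the chance of at least one spurious finding grows quickly with the number of comparisons. The function name is hypothetical.

```python
def familywise_false_positive_rate(n_tests, alpha=0.05):
    """Chance of at least one false positive across independent tests."""
    return 1.0 - (1.0 - alpha) ** n_tests


for n in (1, 5, 10):
    rate = familywise_false_positive_rate(n)
    print(f"{n:2d} tests: {rate:.3f}")
```

Under these assumptions the chance of at least one false positive rises from 5 percent for a single test to about 23 percent for five tests and about 40 percent for ten.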

Why examine and report achievement impacts at the subgroup level, if the evidence indicates only an overall reading gain for the entire sample? The reasons are that Congress mandated an analysis of subgroup impacts, at least for SINI and non-SINI students, and that analyses at the subgroup level might have yielded more conclusive information about disproportionate impacts for certain types of students.

Expanding Choice

The OSP facilitates the enrollment of low-income D.C. students in private schools of their parents’ choosing. It does not guarantee enrollment in a private school, but the $7,500 voucher should make such enrollments relatively common among the students who won the scholarship lottery. The eligible students who lost the scholarship lottery and were assigned to the control group still might attend a private school but they would have to do so by drawing on resources outside of the OSP. At the same time, students in both groups have access to a large number of public charter schools.

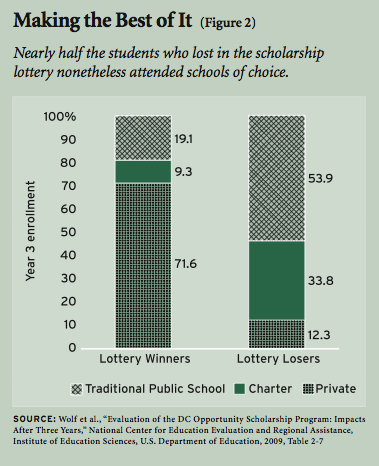

The implication is that, for this evaluation of the OSP, winning the lottery does not necessarily mean private schooling, and losing the lottery does not necessarily mean education in a traditional public school. Members of both groups attended all three types of schools—private, public charter, and traditional public—in year 3 of the voucher experiment, although the proportions that attended each type differed markedly based on whether or not they won the scholarship lottery (see Figure 2). In total, about 81 percent of parents placed their child in a private or public school of choice three years after winning the scholarship lottery, as did 46 percent of those who lost the lottery. The desire for an alternative to a neighborhood public school was strong for the families who applied to the OSP in 2004 and 2005.

These enrollment patterns highlight the fact that the effects of voucher use reported above do not amount to a comparison between “school choice” and “no school choice.” Rather, voucher users are exercising private school choice, while control group members are exercising a small amount of private school choice and a substantial amount of public school choice. The positive impacts on reading achievement observed for voucher users therefore reflect the incremental effect of adding private school choice through the OSP to the existing schooling options for low-income D.C. families.

Parent Satisfaction

Another key measure of school reform initiatives is the perception among parents, who see firsthand the effects of changes in their child’s educational environment. Whenever school choice researchers have asked parents about their satisfaction with schools, those who have been given the chance to select their child’s school have reported much higher levels of satisfaction. The OSP study findings fit this pattern. The proportion of parents who assigned a high grade of A or B to their child’s school was 11 percentage points higher if they were offered a voucher, 12 percentage points higher if their child actually used a scholarship, and 21 percentage points higher if their child was attending a private school in year 3, regardless of whether they were in the treatment group. Parents whose children used an Opportunity Scholarship also expressed greater confidence in their children’s safety in school than parents in the control group.

Additional evidence of parental satisfaction with the OSP comes from the series of focus groups conducted independently of the congressionally mandated evaluation. One parent emphasized the expanded freedom inherent in school choice:

“[The OSP] gives me the choice to, freedom to attend other schools than D.C. public schools….I just didn’t feel that I wanted to put him in D.C. public school and I had the opportunity to take one of the scholarships, so, therefore, I can afford it and I’m glad that I did do that.” (Cohort 1 Elementary School Parent, Spring 2008)

Another parent with two children in the OSP may have hinted at a reason achievement impacts were observed specifically in reading:

“They really excel at this program, ’cause I know for a fact they would never have received this kind of education at a public school….I listen to them when they talk, and what they are saying, and they articulate better than I do, and I know it’s because of the school, and I like that about them, and I’m proud of them.” (Cohort 1 Elementary School Parent, Spring 2008)

These parents of OSP students clearly see their families as having benefited from this program.

Previous Voucher Research

The IES evaluation of the D.C. OSP adds to a growing body of research on means-tested school voucher programs in urban districts across the nation. Experimental evaluations of the achievement impacts of publicly funded voucher and privately funded K-12 scholarship programs have been conducted in Milwaukee; New York City; the District of Columbia; Charlotte, North Carolina; and Dayton, Ohio. Different research teams analyzed the data from New York City (three different teams), Milwaukee (two teams), and Charlotte (two teams). The four studies of Milwaukee’s and Charlotte’s programs reported statistically significant achievement gains overall for the members of the treatment group. The individual studies of the privately funded K-12 scholarship programs in the District of Columbia and Dayton reported overall achievement gains only for the large subgroup of African American students in the program. The three different evaluators of the New York City privately funded scholarship program were split in their assessment of achievement impacts, as two research teams reported no overall test-score effects, but did report achievement gains for African Americans; the third team claimed there were no statistically significant test-score impacts overall or for any subgroup of participants.

The specific patterns of achievement impacts vary across these studies, with some gains emerging quickly, but others, like those in the OSP evaluation, taking at least three years to reach a standard level of statistical significance. Earlier experimental evaluations of voucher programs were somewhat more likely to report achievement gains from the programs in math than in reading—the opposite of what was observed for the OSP. Despite these differences, the bulk of the available, high-quality evidence on school voucher programs suggests that they do yield positive achievement effects for participating students.

Conclusions

School voucher initiatives such as the District of Columbia Opportunity Scholarship Program will remain politically controversial in spite of rigorous evaluations such as this one, showing that parents and students benefited in some ways from the program. Critics will continue to point to the fact that no impacts of the program have been observed in math, or that applicants from SINI schools, who were a service priority, have not demonstrated statistically significant achievement gains at the subgroup level, as reasons to characterize these findings as disappointing. Certainly the results would have been even more encouraging if the high-priority SINI students had shown significant reading gains as a distinct subgroup. Still, in my opinion, the bottom line is that the OSP lottery paid off for those students who won it. On average, participating low-income students are performing better in reading because the federal government decided to launch an experimental school choice program in our nation’s capital.

The achievement results from the D.C. voucher evaluation are also striking when compared to the results from other experimental evaluations of education policies. The National Center for Education Evaluation and Regional Assistance (NCEE) at the IES has sponsored and overseen 11 studies that are RCTs, including the OSP evaluation. Only 3 of the 11 education interventions tested, when subjected to such a rigorous evaluation, have demonstrated statistically significant achievement impacts overall in either reading or math. The reading impact of the D.C. voucher program is the largest achievement impact yet reported in an RCT evaluation overseen by the NCEE. A second program was found to increase reading outcomes by about 40 percent less than the reading gain from the D.C. OSP. The third intervention was reported to have boosted math achievement by less than half the amount of the reading gain from the D.C. voucher program. Of the remaining eight NCEE-sponsored RCTs, six of them found no statistically significant achievement impacts overall and the other two showed a mix of no impacts and actual achievement losses from their programs. Many of these studies are in their early stages and might report more impressive achievement results in the future. Still, the D.C. voucher program has proven to be the most effective education policy evaluated by the federal government’s official education research arm so far.

The experimental evaluation of the District of Columbia Opportunity Scholarship Program is continuing into its fourth and final year of studying the impacts on students and parents. The final evidence collected from the participants may confirm the accumulation of achievement gains in reading and higher levels of parental satisfaction from the program that were evident after three years, or show that those gains have faded. Uncertainty also surrounds the program itself, as the students who gathered on Freedom Plaza in May currently are only guaranteed one final year in their chosen private schools. What will policymakers see as they continue to consider the results of this evaluation? The educational futures of a group of low-income D.C. schoolchildren hinge on the answer.

Patrick J. Wolf is professor of education reform at the University of Arkansas and principal investigator of the D.C. Opportunity Scholarship Program Impact Evaluation. The opinions expressed in this article are his own.

Methodology Notes

If one’s purpose is to evaluate the effects of a specific public policy, such as the District of Columbia Opportunity Scholarship Program (OSP), then the comparison of the average outcomes of the treatment and control groups, regardless of what proportion attended which types of school, is most appropriate. A school voucher program cannot force scholarship recipients to use a voucher, nor can it prevent control-group students from attending private schools at their own expense. A voucher program can only offer students scholarships that they subsequently may or may not use. Nevertheless, the mere offer of a scholarship, in and of itself, clearly has no impact on the educational outcomes of students. A scholarship could only change the future of a student if it were actually used.

Fortunately, statistical techniques are available that produce reliable estimates of the average effect of using a voucher compared to not being offered one and the average effect of attending private school in year 3 of the study with or without a voucher compared to not attending private school. All three effect estimates—treatment vs. control, effect of voucher use, and impact of private schooling—are provided in the longer version of this article (see “Summary of the OSP Evaluation” at www.educationnext.org), so that individual readers can view those outcomes that are most relevant to their considerations.

I have presented mainly the impacts of scholarship use in this essay. Those impacts are computed by taking the average difference between the outcomes of the entire treatment and control groups (the pure experimental impact) and adjusting for the fact that some treatment students never used an Opportunity Scholarship. Since nonusers could not have been affected by the voucher, the impact of scholarship use can be computed easily by dividing the pure experimental impact by the proportion of treatment students who used their scholarships, effectively rescaling the impact across scholarship users instead of all treatment students including nonusers. I focus here on scholarship usage because that specific measure of program impact is easily understood, is relevant to policymakers, and preserves the control group as the natural representation of what would have happened to the treatment group absent the program, including the fact that some of them would have attended private school on their own.
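
The rescaling described above amounts to a single division. The sketch below uses hypothetical inputs, since this essay does not report the pure experimental impact or the usage rate, and the function name `impact_of_use` is an illustrative assumption rather than anything from the evaluation.

```python
def impact_of_use(offer_impact, usage_rate):
    """Rescale the treatment-vs-control impact to scholarship users only.

    offer_impact: average outcome difference between the full treatment
                  and control groups (the pure experimental impact).
    usage_rate:   share of treatment students who used their scholarship.
    """
    if not 0.0 < usage_rate <= 1.0:
        raise ValueError("usage_rate must be greater than 0 and at most 1")
    return offer_impact / usage_rate


# Hypothetical inputs, NOT taken from the evaluation.
example_offer_impact = 3.0   # scale points
example_usage_rate = 0.75    # three of every four treatment students used it

print(impact_of_use(example_offer_impact, example_usage_rate))  # 4.0
```

Because the divisor is a proportion no greater than 1, the estimated impact of use is never smaller in magnitude than the impact of the offer, and the two coincide only when every treatment student uses the scholarship.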