State and federal policymakers have embraced the idea that prospective college students need better information on earnings outcomes for individual colleges and programs of study. One of the Obama administration’s signature higher education policy efforts was the new College Scorecard, which provides information on median income after attending a given college. [i] And several states have developed data systems that allow students to obtain this information for individual programs, such as the average earnings of business majors at a particular college.

These efforts are premised on the notion that better data will push institutions to compete in ways that increase quality and lower costs. But will they work in practice? There are some reasons for skepticism. It is difficult to compare the outcomes of colleges and programs that enroll students with widely varying characteristics (especially academic preparation). Further, there is little agreement on how to combine or synthesize different outcome measures. [ii] These are both reasons the Obama administration abandoned its original plan for “ratings” and replaced it with a “scorecard.”

But even if the measures were perfect, the potential for consumer information to change student decisionmaking may still be constrained by the fact that many students consider only institutions near where they live, out of a desire or need to remain close to home (e.g., because of work or family obligations). In a new report we released last week, Choice Deserts, we empirically document the extent to which earnings data are likely to be useful to potential students who are comparing different institutions that offer the same program (e.g., a bachelor’s degree in business at Virginia Tech vs. at the University of Virginia).

We find that only 36 percent of Virginia high school seniors are likely to be able to use program-level earnings data to make a meaningful distinction between programs of study at two or more institutions. The reason is that students’ college choices are constrained by their academic credentials and geographic location, as well as by their personal career interests. This is especially true for students considering only community colleges near where they live, of whom only 4 percent can use earnings data to compare programs across colleges.

Comparing outcomes across institutions offering the same program is only one possible use of earnings data. Other possible uses include choosing among majors at a given institution, or deciding whether to go to college at all, especially for students who only have one realistic option (such as the local community college).

But for any potential use, earnings data will only impact decisionmaking if students care about those data and can access and make sense of them. For example, how should a student compare two programs with average earnings among graduates of $45,000 vs. $50,000, but at institutions that have graduation rates of 70 percent vs. 50 percent? And that’s if students can even find the data in the first place, as they are often buried in government websites and efforts to make them more accessible often fall short.

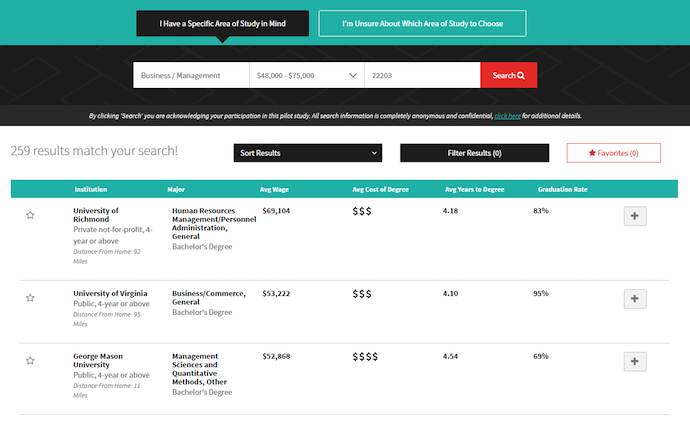

Three recently developed online tools highlight the tradeoffs inherent in trying to bring program-level earnings data to life for potential students. As part of our work in Virginia, we built GradpathVA, a website that allows students to easily compare graduates’ earnings for a given major at different institutions. Our site provides these earnings data alongside other institution-level information such as net price by family income, years to degree, and admissions data.

We built our website with the aim of helping students compare colleges offering a course of study in a given field, as our goal was to understand the extent to which earnings data are useful for this purpose. We erred on the side of a simple presentation of information—focused on comparing earnings and prices within the selected field—which may not be useful for other purposes, such as comparing majors within a selected institution.

Other earnings data websites take a more comprehensive approach at the cost of generating a more complex interface. My Future TX, produced by the research group College Measures, allows Texas students to start from a preferred career, college, or major. The website walks potential students through selecting their own list of colleges and majors, and produces a personalized report with data on average earnings at selected schools one and ten years out from graduation.

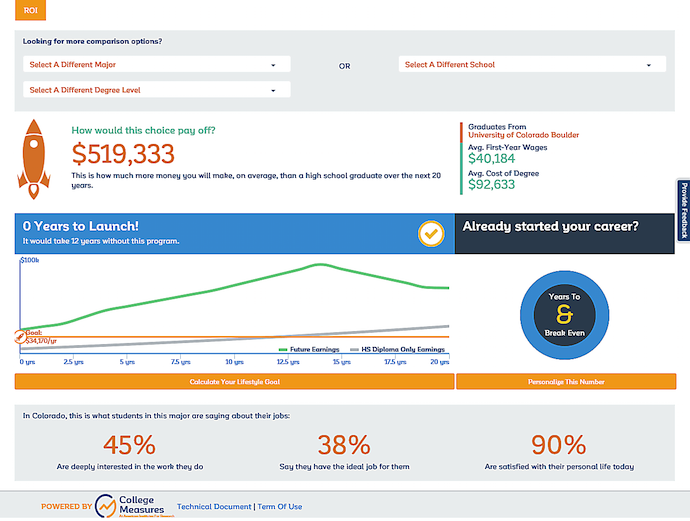

Launch My Career Colorado is another College Measures website that provides a more graphical walk-through format. Once students have settled on a given college and a given major, it produces a return on investment (ROI) calculation, customizable for a student’s “lifestyle goal.” This additional detail comes at the cost of making it more difficult to compare the same program across different institutions.

— Matthew M. Chingos and Kristin Blagg

Matthew M. Chingos is a Senior Fellow at the Urban Institute. Kristin Blagg is a Research Associate II at the Urban Institute.

This post originally appeared as part of Evidence Speaks, a weekly series of reports and notes by a standing panel of researchers under the editorship of Russ Whitehurst.

Notes: