With both houses of Congress moving apace to reauthorize the Elementary and Secondary Education Act (ESEA), the question is not whether the new legislation will reduce the federal government’s footprint in K-12 education; it assuredly will. The question is whether, in their understandable efforts to rein in Washington’s influence, legislators can preserve those elements of federal policy that stand to benefit students and taxpayers—particularly those that fulfill functions that would otherwise go unaddressed within our multi-layered system of education governance.

One key unresolved issue involves the status of competitive grant programs, through which the Department of Education invites states and school districts to apply for funds to support programs that address federally identified priorities. In the current environment, Congress may be tempted to eschew all programs structured in this way, preferring to rely on formulas to ensure that schools receive their fair share of federal funds. That would be a mistake. Flexible competitive grant programs that encourage innovations in policy and practice and ensure that they are subjected to rigorous evaluation should remain a part of ESEA going forward. In particular, the Investing in Innovation (i3) fund, a program created through the American Recovery and Reinvestment Act that is not a part of the reauthorization bills now moving through Congress, deserves a second look.

Increased reliance on competitive grants has been arguably the defining feature of the Obama administration’s K-12 education policy. Its signature Race to the Top program (RTT) asked states to compete for $4.35 billion in federal grants based on their commitment to implement a 19-item reform agenda. Expansive in its scope, RTT quickly became a symbol of what Senate Health, Education, Labor and Pensions Committee chairman Lamar Alexander has characterized as the Department’s efforts to dictate to states and school districts the details of how best to improve local schools. Congressional discontent with RTT-style policies is not limited to Republicans, however. Most legislators prefer to claim credit for funds allocated by formula rather than risk the ire of constituents whose applications are rejected, and rural members in particular often feel as if their districts are at a disadvantage when funding is competitive. Perhaps because of this discontent, President Obama’s 2016 budget proposal did not include funds for a new RTT competition.

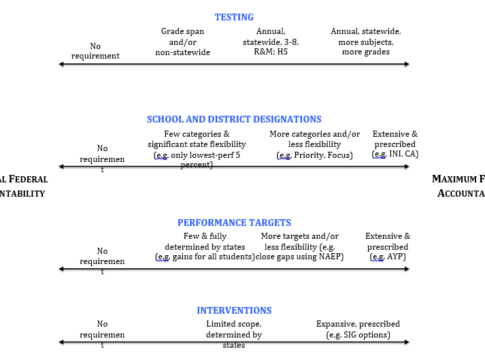

But rather than paint all competitive grants with a broad brush, it is useful to consider differences in their structure. The table below shows that competitive grant programs can vary on at least two dimensions. First, the programs can be broad, aiming to incentivize policy changes in multiple areas in one fell swoop, or narrowly focused on a specific challenge facing most school systems. Second, grants can be awarded based on applicants’ willingness to commit to a detailed set of policy changes and program requirements prescribed by Washington, or they can be awarded based on past success, with funding levels tied to the strength of the evidence applicants can present of their programs’ effectiveness.

RTT epitomized the broad, prescriptive approach to competitive grants. Although presented by supporters as an opportunity for states to put forward their best and most innovative ideas, in fact the selection criteria amounted to a detailed list of commitments in areas ranging from state standards and data systems to teacher evaluation systems and strategies to turn around low-performing schools. Because funding was based primarily on future commitments, the program did little to alter the compliance-oriented relationship between federal officials and state and local educators once grants were awarded. As Rick Hess of the American Enterprise Institute has written, “the aftermath entailed years of invasive federal monitoring…during which junior staff at the U.S. Department of Education exerted remarkable influence over the states that received RTT funds.” While it is too soon to know whether states awarded RTT grants will see improvements in student outcomes, there is little hope that their efforts will be a source of rigorous evidence on the merits of specific policies they pursued. The sheer number of policies states were required to implement simultaneously makes it all but impossible to isolate the impact of any one.

Yet RTT was the exception, not the rule. Its scale reflected the unique circumstances of the post-financial-crisis stimulus package, and maintaining a single competitive grant program at this scale has already proven to be politically infeasible.

Other competitive grant programs are structured quite differently, with a narrow focus tied to a distinct federal purpose. For example, the Teacher Incentive Fund created by the George W. Bush administration asks school districts and charter schools to commit to implementing performance-based teacher compensation systems. The rationale is that local officials will be more likely to adopt politically controversial changes to how teachers are compensated when outside resources are available to support their efforts. For the past few years, the Department of Education has also offered grants directly to charter management organizations seeking to expand or replicate high-quality schools. Those schools need not adhere to a particular pedagogical model but must instead document a track record of improving student outcomes. The Teacher Incentive Fund and grants to expand and replicate high-quality charter schools have been included in both the House committee’s bill and Senator Alexander’s initial discussion draft.

Those bills do not, however, include the Investing in Innovation fund (i3), the second major competitive grant program created through the stimulus package. Initially funded at $650 million, i3 allowed school districts, charter schools, and non-profit organizations working in partnership with one of those entities to apply for grants to support innovative programs aligned with one of four broadly defined federal priorities (e.g., supporting effective teachers and principals or improving the use of data). In other words, i3 was broad in its focus but avoided prescription with respect to the design of the programs eligible for federal support.

The origins and implementation of i3 have been ably chronicled by Ron Haskins and Greg Margolis, who present the program as a cornerstone of the Obama administration’s broader efforts to base spending on social programs on rigorous evidence. Two specific aspects of the design of i3 are especially noteworthy. First, the competition used a tiered evidence model to align the amount of funding a program could receive to the strength of the evidence to support its effectiveness. Second, grant winners were required to conduct rigorous evaluations and were selected in part based on the quality of their proposed evaluation design. Across the first four funding cohorts, i3 supported 53 randomized controlled trials, the gold-standard design for evaluations of program effectiveness and one that until recently was virtually unknown in the education sector. (Full disclosure: my primary employer, the Harvard Graduate School of Education, has benefited from i3 as a direct grantee and through evaluation contracts; I am the principal investigator on two of those contracts.)

A competitive grant program that includes these design elements need not be called i3. Indeed, it need not be drafted as a standalone program at all. The Coalition for Evidence-Based Policy has proposed language that would simply allow the Department of Education to reserve up to one percent of funding of all ESEA programs (except Title I) to award grants for innovation and research, with grant amounts based on the tiered evidence model used in i3. The proposal is modeled on the Small Business Innovation Research (SBIR) program, under which 11 federal agencies since 1982 have set aside a small percentage of their budgets to award grants to small companies engaged in the development and evaluation of new technologies. As the Coalition notes, both the Government Accountability Office and the National Academy of Sciences have offered consistently positive assessments of the program’s success. Importantly, the proposal, like SBIR, would include small businesses as eligible grantees, addressing a shortcoming of the original i3 program that arguably limited the types of innovations proposed.

The Coalition’s proposal could be strengthened by giving the Institute of Education Sciences the lead role in assessing the strength of applicants’ evidence of effectiveness and in supporting required evaluation activities. The risk with these competitions when carried out by the Office of the Secretary is that they become politicized, that they are judged by review panels without methodological competence, and that they are overseen, once awarded, by career staff in program offices that do not have the background to monitor what is, at root, a program evaluation grant. These risks could be substantially reduced if the competition were funded as a line item in the IES budget, with statutory language requiring that review panels include both practitioners and researchers.

Properly designed competitive grant programs provide an opportunity for Congress to target resources at federal priorities and encourage innovative problem-solving while avoiding federal mandates. They should avoid prescription and both reward and produce rigorous evidence, thus increasing the share of education dollars spent on evidence-based programs while at the same time fulfilling the federal government’s unique responsibility for producing and disseminating high-quality evidence on the best ways to improve American schools. The i3 program was a promising step in this direction. It would be unfortunate if Congress were to miss the opportunity to make something similar a permanent feature of ESEA.

—Martin R. West

This post originally appeared on the Brown Center Chalkboard